Bare metal infrastructure has established itself firmly in the mainstream. As applications become more demanding and the performance margins shrink, virtualized environments often bring limitations that teams can no longer afford.

This is why bare metal servers are gradually replacing VPS and shared infrastructure for production workloads.

However, once you reach that decision, the next question is inevitable: should you go for Intel or AMD? Both platforms support contemporary bare metal server hosting, yet they’re suited to different situations.

This article compares Intel and AMD bare metal servers based on their real-world differences.

Why Bare Metal is Better Than Virtualized Infrastructure

Dedicated bare metal servers provide direct access to physical hardware, as they do not include a hypervisor layer.

Compared with VPS plans, where physical resources are shared among tenants, bare metal delivers consistent performance under sustained, continuous demand.

This is especially vital for:

- Databases

- CI pipelines

- Analytics engines

- Backend services that operate continuously

The strength of bare metal servers is control. You choose the number of CPU cores, the amount of memory, and the storage. For organizations that have already reached the limits of virtualization, bare metal is not just about the raw speed; it’s also about consistency and reliability.

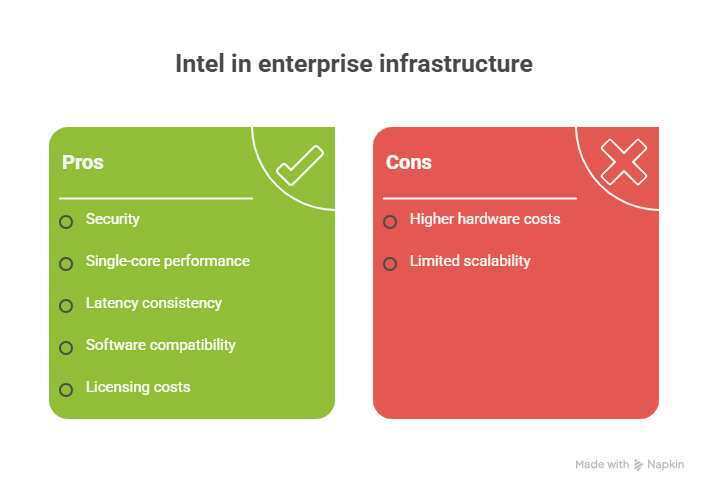

Intel Bare Metal Servers: Reliability, Versatility, and Power

Intel remains deeply embedded in enterprise infrastructure, and for good reason. Intel bare metal servers are often the safest choice for workloads that rely on established ecosystems and predictable behavior.

Intel CPUs are known for strong single-core performance, which still matters more than many people assume. Many production applications, especially legacy and enterprise software, don’t scale well to a large number of threads.

Intel platforms are particularly well-suited for transactional databases, ERP systems, and financial workloads where latency consistency is critical.

They also tend to do well with software stacks that use Intel-specific instruction sets or have been tested mainly on Intel hardware.

Another factor that’s not always considered is licensing. Many commercial applications charge on a per-core basis.

With the relatively lower core counts of many Intel configurations, the licensing bill can come out lower even if the hardware costs more initially.
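The licensing trade-off is simple arithmetic. The sketch below is only an illustration: the `total_cost` helper, the prices, the core counts, and the three-year term are all hypothetical figures, not quotes from any vendor or provider.

```python
def total_cost(hardware_cost, cores, license_per_core, years=3):
    """Hardware is paid once; the per-core license is paid every year."""
    return hardware_cost + cores * license_per_core * years

# Hypothetical figures, for illustration only.
fewer_cores = total_cost(hardware_cost=9000, cores=16, license_per_core=1500)
more_cores = total_cost(hardware_cost=7000, cores=32, license_per_core=1500)

print(fewer_cores)  # 81000
print(more_cores)   # 151000
```

Under these assumed numbers, the cheaper server ends up costing far more overall because the license fee dominates. With free or flat-rate software, the comparison reverses.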

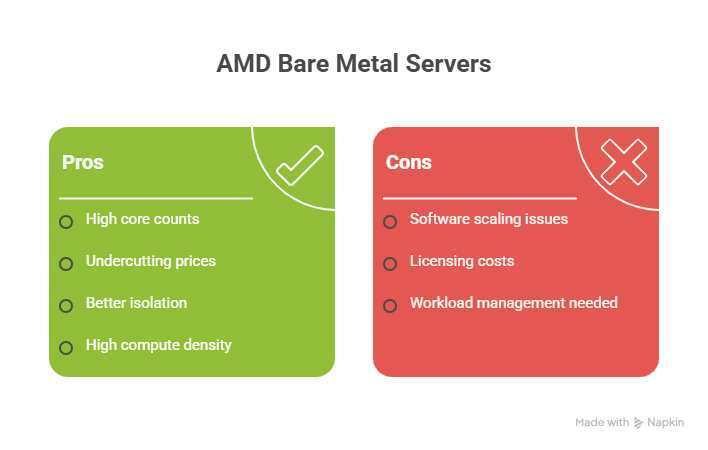

AMD Bare Metal Servers: Core Density and Contemporary Workloads

AMD made its mark in the bare metal market by focusing on high core counts and competitive pricing.

A 36-core bare metal server on AMD will allow you to run heavy multithreaded applications with ease. This is something you’ll rarely find on Intel servers at the same price.

The difference is more evident when multiple services are deployed on a single node. With more cores available, each service can be given its own dedicated cores, which improves isolation between processes without the use of virtualization.
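One common way to get this kind of isolation on Linux is CPU affinity. The sketch below is a minimal illustration, not a production setup: `partition_cores` and `pin_current_process` are hypothetical helpers, the service names are made up, and real deployments would also need to account for NUMA layout and shared caches.

```python
import os

def partition_cores(total_cores, services):
    """Split core IDs 0..total_cores-1 into contiguous, non-overlapping sets."""
    per_service = total_cores // len(services)
    if per_service == 0:
        raise ValueError("more services than cores")
    return {
        name: set(range(i * per_service, (i + 1) * per_service))
        for i, name in enumerate(services)
    }

def pin_current_process(core_ids):
    """Restrict the calling process to core_ids (only where the OS supports it)."""
    if hasattr(os, "sched_setaffinity"):  # available on Linux
        os.sched_setaffinity(0, core_ids)  # pid 0 means the calling process

# Hypothetical layout: three services sharing a 36-core node.
layout = partition_cores(36, ["api", "worker", "cache"])
print(sorted(layout["worker"]))  # cores 12 through 23
```

Each service would call `pin_current_process(layout[name])` at startup so that it never competes with its neighbors for the same cores.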

For teams planning to buy bare metal server hosting for the highest compute density, AMD is often the better-value choice.

That said, a high core count can also be a disadvantage if your software can’t scale, or if your licensing costs grow linearly with the number of cores.

AMD works well when workloads are designed to exploit parallel execution.

Performance is Not Based on the Brand, But the Workload Behavior

The Intel versus AMD debate is often driven by emotion, but the performance difference depends on the type of workload. Neither is the outright winner.

- For CPU-bound workloads that use a limited number of hot threads, Intel’s per-core advantage can deliver better performance than AMD, even though it has fewer cores.

- For massively parallel workloads, it’s difficult to overlook AMD’s throughput advantage.

- Memory-intensive applications generally do well on both platforms; however, AMD systems typically offer more memory bandwidth per dollar at higher core counts.

- In terms of storage and networking, performance depends far more on server configuration and provider quality than on the CPU brand.
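The first two points can be illustrated with Amdahl's law, which limits the speedup from extra cores by the share of work that must run serially. The sketch below is a rough model, not a benchmark: the two machines, their core counts, and the per-core speed ratios are hypothetical and do not describe any specific Intel or AMD product.

```python
def relative_throughput(parallel_fraction, cores, per_core_speed):
    """Amdahl's law scaled by per-core speed (1.0 = baseline core)."""
    serial = 1.0 - parallel_fraction
    speedup = 1.0 / (serial + parallel_fraction / cores)
    return per_core_speed * speedup

# Hypothetical machines: A has fewer, faster cores; B has more, slower cores.
for p in (0.50, 0.99):
    a = relative_throughput(p, cores=16, per_core_speed=1.2)
    b = relative_throughput(p, cores=64, per_core_speed=1.0)
    print(f"parallel={p:.2f}  A={a:.2f}  B={b:.2f}")
```

In this model, machine A comes out ahead when only half of the work parallelizes, while machine B wins clearly when 99% of it does. The crossover point depends entirely on how your own workload behaves.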

This is why custom bare metal servers matter. In practice, tailoring CPU, memory, and storage to the workload is often more important than choosing a manufacturer.

Both platforms offer extensive customization options, but the ideal setup will vary depending on whether you prioritize core count, clock speed, or memory bandwidth.

Impact of Location and Latency: The Role of USA Bare Metal Server Hosting

CPU architecture is not the only factor that determines performance. Physical location still matters.

USA bare metal server hosting is a popular choice for teams that target a North American audience, must meet regulatory requirements, or need access to the largest cloud interconnects.

Applications that are highly sensitive to latency gain substantial advantages when deployed close to the end users or upstream services.
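Distance sets a hard floor on latency that no CPU can remove. The sketch below estimates that floor, assuming light in optical fiber covers roughly 200 km per millisecond; the `min_rtt_ms` helper and the 4,000 km path are hypothetical, and real routes are longer and add switching and queuing delay.

```python
FIBER_KM_PER_MS = 200.0  # approximate speed of light in optical fiber

def min_rtt_ms(distance_km):
    """Lower bound on round-trip time over a fiber path of the given length."""
    return 2 * distance_km / FIBER_KM_PER_MS

# Hypothetical 4,000 km path between a server and its users.
print(min_rtt_ms(4000))  # 40.0
```

Every request that crosses that path pays at least this round trip, which is why server location belongs in the hardware decision.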

Choosing the Best Option

The decision between Intel and AMD isn’t a matter of pursuing the highest benchmarks. It comes down to recognizing how your workload behaves under constant load and how it scales.

In real-world scenarios, the best answer often lies somewhere in the middle. Combining Intel and AMD across different workload tiers can provide a balance of performance, cost, and flexibility.