During preparation for investments, audits, or certifications, attention to cybersecurity increases. Investors, auditors, and certification bodies expect the company to be able to confirm the technical level of protection of its assets. In this context, a pentest functions as a tool that helps eliminate “blind spots” before official inspections and avoid unpleasant surprises that can cost money, time, and reputation.

The benefits of a pentest for an audit

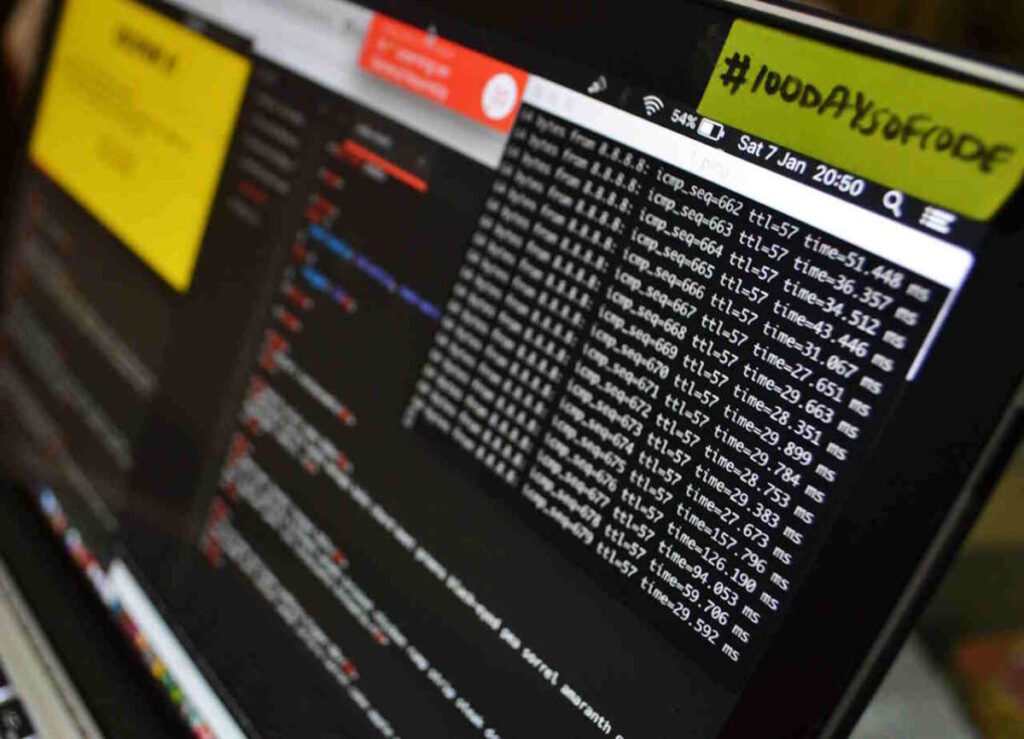

A pentest is a practical security test in which specialists simulate the actions of real attackers to identify potential entry points for a cyberattack. Preparing for an audit or investment shapes the focus of penetration testing, because it defines the perimeter that an external party will assess.

A pentest helps determine how well protected the critical components are, meaning those of interest to auditors, investors, or regulators. It is a technical assessment of real risks, and it lets the company learn about vulnerabilities before due diligence or a compliance check rather than during it.

A pentest report demonstrates a responsible approach and transparency to investors, auditors, and consultants. Depending on the objective, its structure may vary: investors are interested in the impact of identified risks, while auditors focus on comparing the results with the requirements.

Typical issues, such as incorrect network segmentation, excessive access, critical vulnerabilities in web applications, leaks of tokens or keys, and weak environment isolation, can delay the audit, reduce the company’s valuation, or even cause an investor to withdraw.

Who should perform the pentest?

For assessments before certifications and audits, it is important that the testing be performed by external experts, not employees who developed the product or administer the infrastructure. This eliminates the risk of a conflict of interest and ensures objectivity.

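The ISO 27001, SOC 2, and PCI DSS standards formulate independence requirements differently, but the essence is the same: an independent tester inspires more trust. PCI DSS requires penetration testing directly and expects the tester to be organizationally independent of the environment under test, a condition an external provider satisfies by default. For SOC 2 and ISO 27001, an external pentest is a best practice that significantly improves audit results.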

Auditors and investors value evidence: not just the fact that a pentest was conducted, but also its quality, the qualifications of the testers, and their independence from the object of testing. Therefore, to meet regulatory requirements and confirm the reliability of their assets, companies turn to specialized teams like Datami, which have experience with various standards and can deliver results that truly matter during external evaluations.

Pentest as preparation for external audits and certification

- Although ISO 27001 does not explicitly require a pentest, it helps confirm the implementation of technical controls and becomes part of the risk assessment process – a mandatory element of the standard. Essentially, it is a “trial exam” that allows vulnerabilities to be addressed before external auditors arrive and helps prepare artifacts that demonstrate system maturity.

- In PCI DSS, the role of the pentest is clearly regulated: both external and internal penetration testing must be conducted within the defined perimeter. All components that store, process, or transmit payment card data are tested. This is not just a formality: finding vulnerabilities before the assessment significantly reduces remediation costs and accelerates certification.

- For SOC 2, pentest results are among the most convincing pieces of evidence that security controls are effective. Although a pentest is not a mandatory requirement, it significantly reduces the risk of receiving a “qualified opinion,” and auditors look favorably on companies that demonstrate care for their cybersecurity.
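The three cases above can be kept as a small lookup when planning which tests a given set of target standards calls for. The sketch below only encodes what this article states; the class and function names (`PentestExpectation`, `mandatory_standards`) are hypothetical and chosen for illustration, and the summary is not a substitute for reading the standards themselves.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PentestExpectation:
    standard: str
    mandatory: bool  # as described in this article, not a legal reading
    role: str


# Summary of the three bullets above (illustrative only).
EXPECTATIONS = [
    PentestExpectation(
        "ISO 27001", False,
        "supports the risk assessment and confirms technical controls",
    ),
    PentestExpectation(
        "PCI DSS", True,
        "external and internal testing within the defined perimeter",
    ),
    PentestExpectation(
        "SOC 2", False,
        "evidence that security controls are effective",
    ),
]


def mandatory_standards(targets):
    """Return the target standards for which a pentest is described as mandatory."""
    wanted = set(targets)
    return [e.standard for e in EXPECTATIONS if e.standard in wanted and e.mandatory]


print(mandatory_standards(["ISO 27001", "PCI DSS"]))
```

For a company targeting ISO 27001 and PCI DSS, only PCI DSS is returned as mandatory; for the other two standards the pentest remains a recommended practice, as described above.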

Benefit: Why it’s cheaper to discover vulnerabilities early

Fixing vulnerabilities after an audit typically costs more than fixing them beforehand, because the risks of fines, delays, paused investment, and reputational loss are added to the remediation work itself. A pentest helps avoid these additional expenses, as well as situations where the audit stops because of critical issues that could have been resolved much earlier.

When exactly to conduct a pentest

The best moment for penetration testing is before the final stage of negotiations with investors or 2–3 months before certification, to leave time for remediation. The test may uncover critical vulnerabilities that require significant changes or system upgrades, and those cannot be completed in the last days before an audit.
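As a rough planning aid, the 2–3 month guideline can be turned into a date window. The sketch below assumes that "2–3 months before certification" means the pentest should take place between three and two calendar months before the certification date; the helper names `months_before` and `pentest_window` are hypothetical.

```python
import calendar
from datetime import date


def months_before(d: date, months: int) -> date:
    """Return the date `months` calendar months before `d`, clamping the day
    to the last day of the target month (e.g. May 31 minus 3 months is Feb 28)."""
    total = d.year * 12 + (d.month - 1) - months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def pentest_window(certification_date: date):
    """Earliest and latest suggested pentest dates under the stated assumption."""
    return months_before(certification_date, 3), months_before(certification_date, 2)


earliest, latest = pentest_window(date(2025, 9, 30))
print(earliest, latest)  # 2025-06-30 2025-07-30
```

The window is only a starting point: an environment with many known issues or long change-approval cycles may need a longer remediation buffer.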

After resolving risks, it is advisable to conduct a retest to confirm that the issues have truly been fixed and the environment is ready for an audit or investment review. The Datami team, for example, provides a free retest in such cases (you can learn more on the website).

Conclusion

A pentest is more than just a technical procedure. It is a tool of trust that strengthens the company’s position before any external assessments and helps avoid negative consequences of regulatory audits.

High-quality independent testing not only reduces risks but also increases the chances of successful investments and certification.

If your company needs to assess its level of security before an audit or prepare for certification, Datami experts will conduct a pentest, provide a security assessment report with recommendations for vulnerability remediation, and, if needed, offer a free retest.